GitHub - judeleonard/Prescriber-ETL-data-pipeline: An End-to-End ETL data pipeline that leverages pyspark parallel processing to process about 25 million rows of data coming from a SaaS application using Apache Airflow as an orchestration

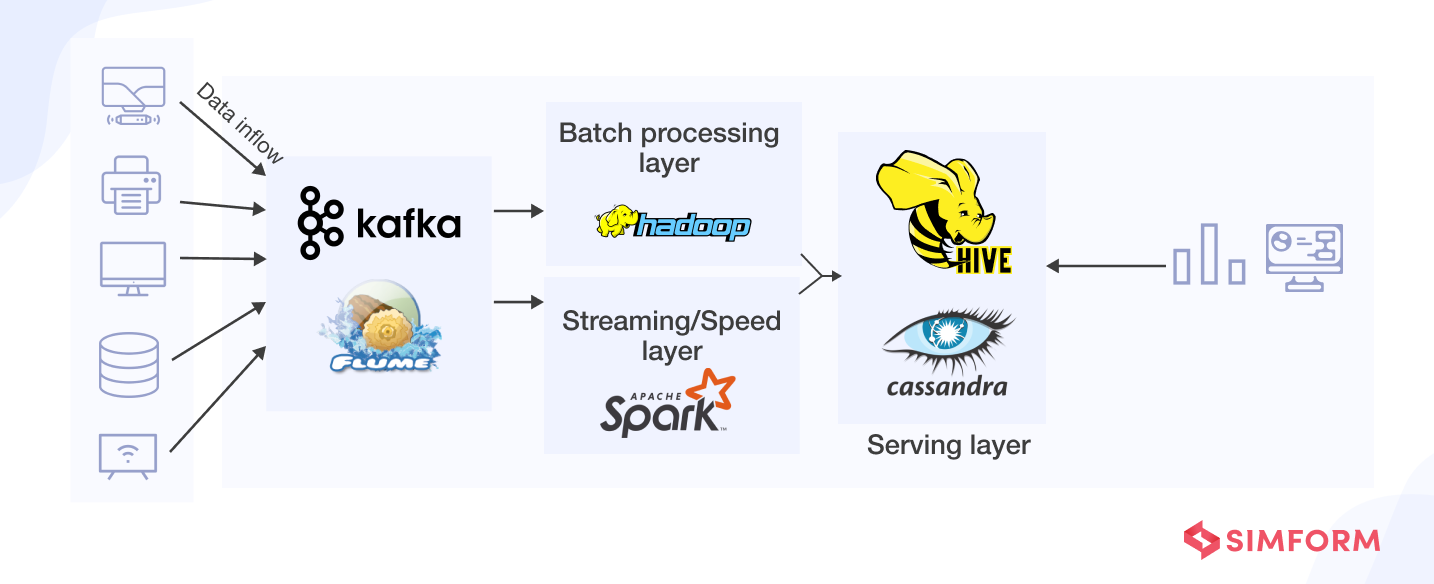

Streaming Data Pipeline to Transform, Store and Explore Healthcare Dataset With Apache Kafka API, Apache Spark, Apache Drill, JSON and MapR Database | HPE Developer Portal

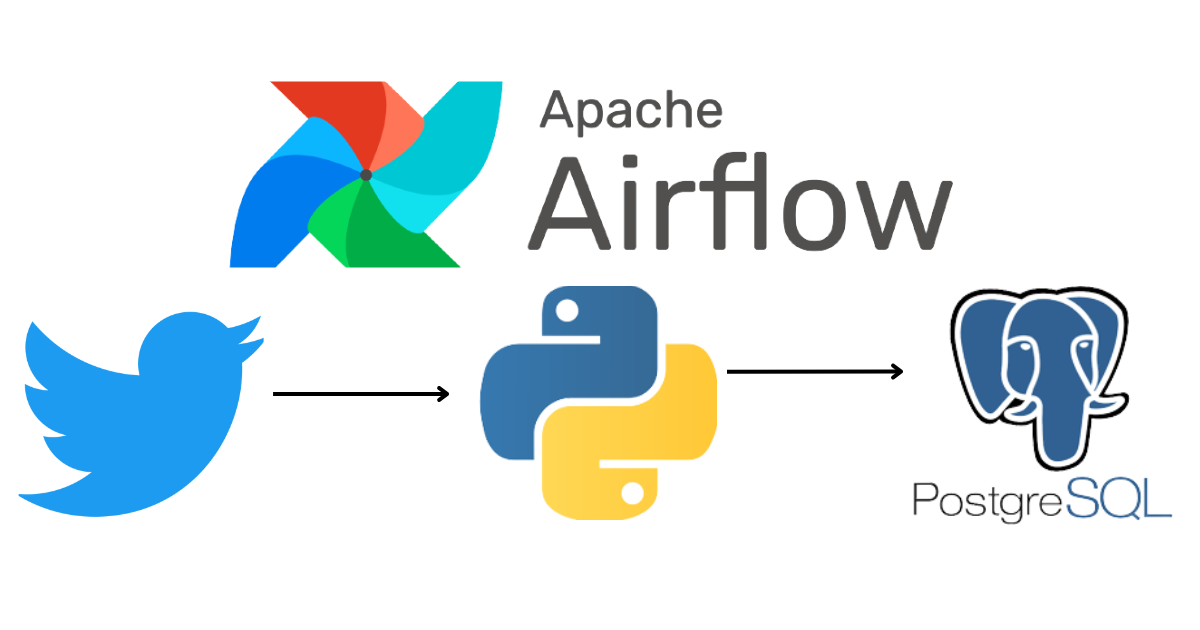

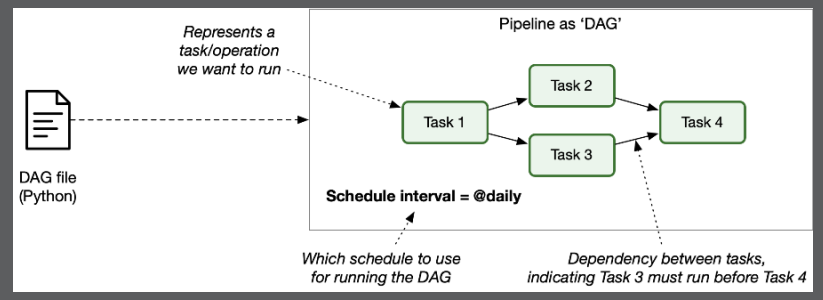

Apache Airflow (Open Source Tool for Data Pipelines) — Introduction and Installation | by Tushar Sharma | Medium
